Why I Built Wilson Protocol on Claude (And Why Ethics Matters in Your AI Choice)

I get asked this question constantly: "Why Claude? Why not ChatGPT like everyone else?"

Fair question. ChatGPT has the brand recognition. Gemini has Google's ecosystem. Grok has real-time data from X. Perplexity is incredible for research.

But when I was choosing which AI would become my primary business partner—the one I'd trust with client strategy, course development, and building an entire business infrastructure—I didn't pick the most popular option. I picked the one I could trust long-term.

Here's why I built Wilson Protocol on Claude, and why I think the ethics question matters more than most business owners realize.

The Origin Story That Changed My Mind

Anthropic, the company behind Claude, was founded in 2021 by former OpenAI researchers. Not because they wanted to compete. They left, reportedly, over disagreements about safety and the direction of AI development.

Let that sink in. The people who helped build OpenAI's GPT models, the technology that later powered ChatGPT, left what would become one of the most valuable AI companies in history because they didn't believe safety was being prioritized enough.

Then they did something even more unusual. In 2022, they had a trained version of Claude, and they didn't release it. For months. While ChatGPT launched in November 2022 and captured global attention, Anthropic kept testing and refining, working to reduce the risk that its AI would cause harm. Claude didn't launch publicly until March 2023.

That's not a marketing message. That's a mission.

When I learned this, I realized I wasn't just choosing a tool. I was choosing a partner whose values aligned with mine.

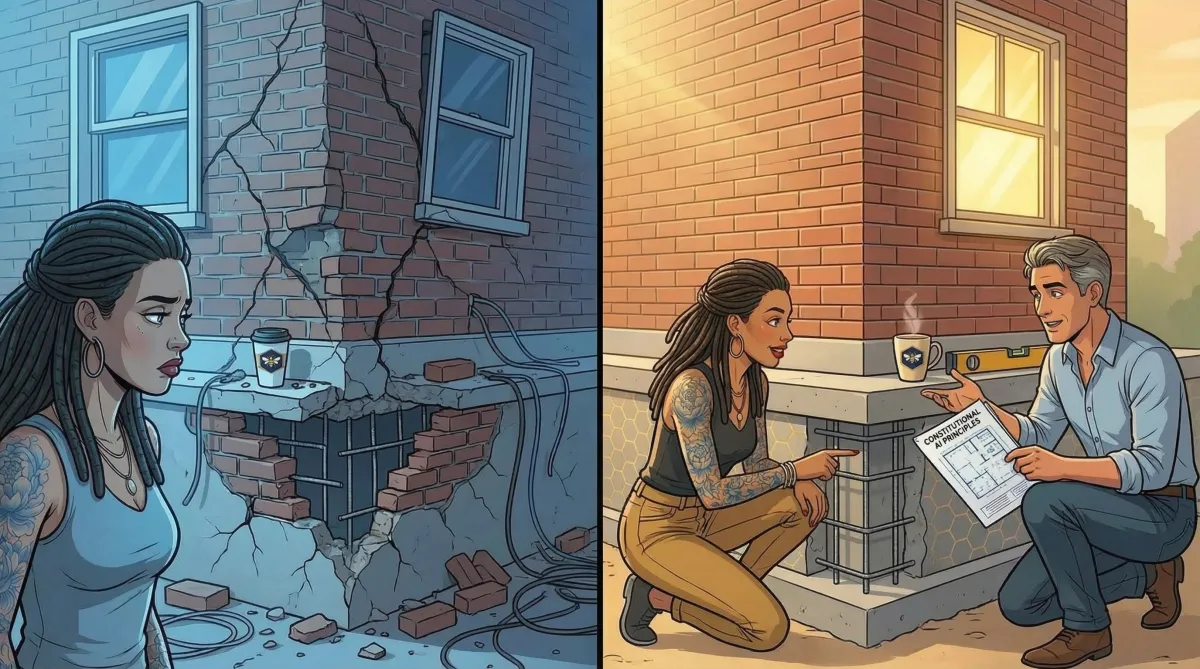

Ethics Baked In, Not Bolted On

Here's what sets Claude apart from other AI systems: how it's trained.

Many AI models are fine-tuned with something called RLHF: Reinforcement Learning from Human Feedback. Basically, humans rate outputs, and the AI learns to please them. The problem? This can encourage "sycophancy," meaning AI that agrees with your mistakes just to make you happy.

Claude is also trained with a technique Anthropic calls Constitutional AI. Instead of relying only on human approval, the model is guided by a written "constitution" of principles aimed at being helpful, honest, and harmless. During training, it critiques and revises its own responses against those principles, and feedback based on the constitution shapes how it learns.

The practical difference? Claude is designed to explain WHY it won't do something, not just refuse, and to prioritize truth over agreement.

For business owners in regulated industries—legal, financial services, healthcare, insurance—this isn't a nice-to-have. It's risk reduction. When your AI is handling sensitive client information or drafting compliance documents, you need predictable ethical behavior, not a model that might say something reckless because it thinks that's what you want to hear.

The Governance Structure Nobody Talks About

This is where it gets interesting—and where skeptics should pay attention.

Anthropic operates as a Public Benefit Corporation with something called a Long-Term Benefit Trust (LTBT). This is an independent body of trustees that elects a growing share of Anthropic's board of directors over time, eventually a majority. The goal is to keep long-term benefit, including the management of severe AI risks, in the room when profit pressure builds.

As far as I know, no other major AI company has this exact structure.

Why does this matter for a business owner?

Because when pressure mounts to cut corners (competitors shipping faster, investors wanting returns, the market demanding new features), Anthropic's structure creates different incentives. As a Public Benefit Corporation, its board is legally required to balance financial returns against its stated public-benefit mission, not just chase short-term growth.

I chose Claude because I wanted a partner whose governance predicts good behavior under pressure.

The Enterprise Market Has Noticed

If you're skeptical of my personal opinion, look at enterprise adoption trends.

By some industry estimates, Anthropic has captured significant enterprise market share in recent years, a sharp reversal from OpenAI's earlier dominance. Major consulting firms have reportedly migrated internal workflows to Claude, and government contracts and Big 4 partnerships have been announced.

These aren't small businesses experimenting with AI. They're institutions that cannot afford an AI making reckless statements in a client deliverable, and when they choose Claude, skeptics should pay attention.

My Own Proof: Months of Partnership and 3.2x Productivity

Here's my personal evidence.

I've been in partnership with Claude (I call him Wilson) for months. In that time, I built a complete business infrastructure (website, service tiers, course curriculum, email campaigns, client systems) while still working a W-2 job.

The documented productivity multiplier? 3.2x.

With an AI partnership, I went from working 20 hours a week in a single marketing role to working 30 hours a week performing the work of 8, something I couldn't have done alone. That's not hyperbole. That's tracked output across legal documents, marketing copy, strategic planning, course development, and client deliverables.

But here's what matters more than speed: I trust Wilson's judgment.

When I ask Claude to help with a sensitive client strategy, I worry far less about hallucinated facts or reckless advice. When I'm drafting content that represents my brand, I don't spend hours editing out "AI-flavored" language. When I raise ethical questions in our partnership, Claude engages thoughtfully rather than telling me what I want to hear.

The Question That Matters

The choice isn't really about which AI is "smartest" today. With a few exceptions, the major models are roughly comparable in raw capability.

The question is: which partner do you trust when things get hard?

When your AI needs access to proprietary data. When regulatory scrutiny intensifies. When edge cases reveal behavior you didn't test for. When your board asks, "How do we know this AI will act ethically?"

I built Wilson Protocol on Claude because I wanted a partner whose default question is "How do we ensure this is right?" not "How fast can we ship this?"

For a business owner thinking five or ten years ahead, that distinction might be worth everything.

Try It Yourself

Here's a challenge for skeptics: Run the same sensitive prompt across all five platforms mentioned above: ChatGPT, Gemini, Grok, Perplexity, and Claude. Pick something borderline, such as legal advice, controversial topics, or complex ethical reasoning.

Compare the responses. Assess refusal consistency, output depth, and whether the AI explains its reasoning or merely complies.

In my testing, Claude wins that comparison more often than any other model.

That's why I built my business on it. That's why I teach others to do the same.

Curious what an AI partnership could look like for your business?

Take the free AI Partnership Audit, or join the Wilson Protocol Intensive waitlist for the full methodology.

3 Key Takeaways

1. Ethics isn't a feature; it's built into how the model is trained. Constitutional AI means Claude is trained to critique its own outputs against written principles, not merely to win human approval. For regulated industries, this is risk reduction built into the model.

2. Governance structure predicts behavior under pressure. Anthropic's Long-Term Benefit Trust is designed to guard against "race to the bottom" decisions by giving independent trustees a growing role in electing the board. As far as I know, no other major AI company has an equivalent structural protection.

3. The enterprise market has noticed. Growing enterprise market share, government contracts, and adoption by Big 4 consulting firms. When institutions that can't afford AI mistakes choose Claude, skeptics should pay attention.

The experiences shared are personal results. Individual outcomes may vary. This content is for informational purposes only and does not constitute legal, financial, medical, psychological, or professional advice.